Meta announced today that it will roll out new direct messaging restrictions for teens on Facebook and Instagram that, by default, block messages from anyone they are not connected to.

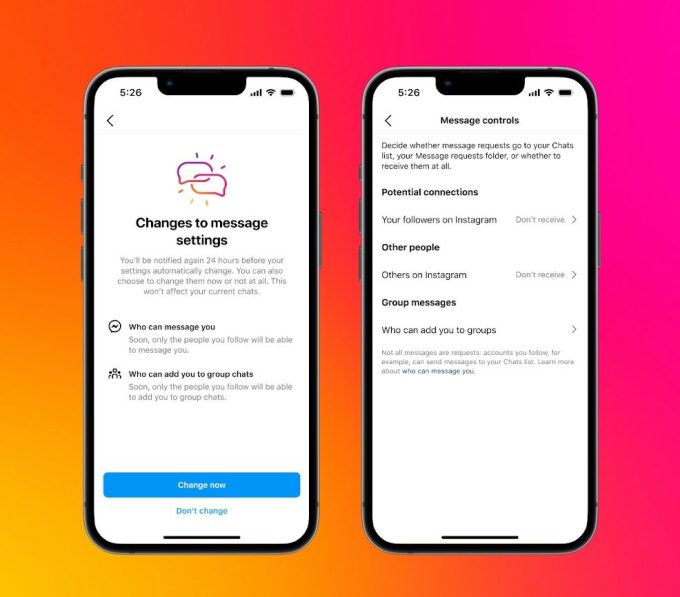

Until now, Instagram has restricted adults over 18 from messaging teens who don’t follow them. The new limits will apply by default to all users under 16, and in some geographies to those under 18. Meta said it will notify existing users of the change.

Image credits: Meta

In Messenger, users will only get messages from friends on Facebook, or people in their contacts.

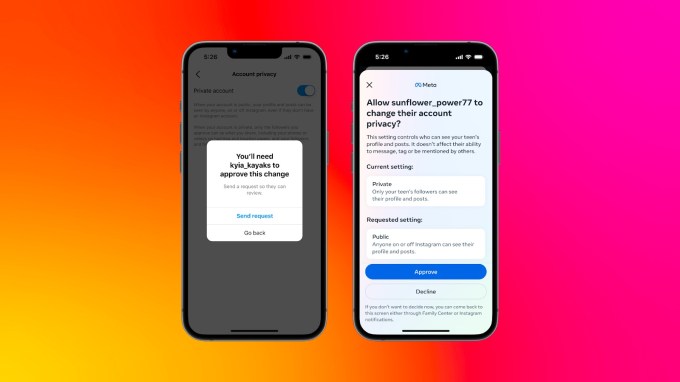

Meta is also making its parental controls more robust by letting guardians approve or deny changes that teens make to default privacy settings. Previously, when teens changed these settings, guardians would receive a notification but couldn’t take any action on it.

As an example, the company said that if a teenage user tried to make their account public instead of private, changed the sensitive content control from “Less” to “Standard,” or tried to change the controls around who could direct message them, guardians could block the change.

Image credits: Meta

Meta first rolled out parental supervision tools for Instagram in 2022, giving guardians insight into their teens’ use.

The social media giant said it is also planning to launch a feature that will prevent teens from seeing unwanted and inappropriate photos in their direct messages sent by people they are connected to. The company added that this feature will work in end-to-end encrypted chats as well and will “discourage” teens from sending these types of images.

Meta did not specify what work it is doing to protect teens’ privacy while implementing these features. It also did not provide details about what it considers “inappropriate.”

Earlier this month, Meta rolled out new tools to restrict teens from seeing content related to self-harm or eating disorders on Facebook and Instagram.

Last month, Meta received a formal request for information from EU regulators, who asked the company to provide more details about its efforts to prevent the sharing of self-generated child sexual abuse material (SG-CSAM).

Meanwhile, the company is facing a civil action in New Mexico state court alleging that Meta’s social network promoted sexual content to teenage users and promoted underage accounts to scammers. In October, more than 40 US states filed a lawsuit in a federal court in California accusing the company of designing products in a way that harms children’s mental health.

The company is scheduled to testify before the Senate on issues related to child safety on January 31 of this year along with other social media networks including TikTok, Snap, Discord and X (formerly Twitter).