WASHINGTON – Subcommittee on Cybersecurity, Information Technology, and Government Innovation

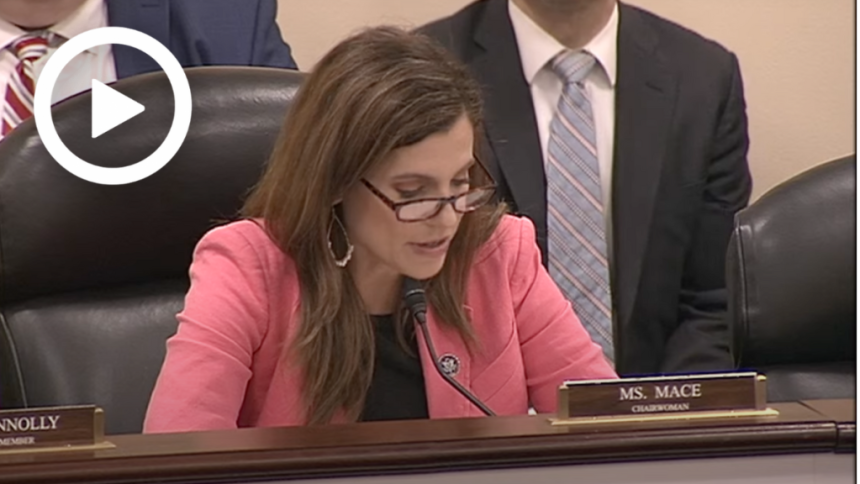

Nancy Mace (R-S.C.), Chairwoman of the Subcommittee on Cybersecurity, Information Technology, and Government Innovation, delivered opening remarks at the hearing titled “How Are Federal Agencies Leveraging Artificial Intelligence?” In her opening statement, Subcommittee Chairwoman Mace emphasized that the federal government, as the nation’s largest employer, must prepare for the widespread impact of AI. She went on to warn that, like any tool, AI can be used for the wrong purposes or misused without proper safeguards. She concluded by highlighting legislation that would enable federal agencies to use AI systems effectively, safely, and transparently.

Below are Subcommittee Chairwoman Mace’s remarks as prepared for delivery.

Hello. Welcome to this hearing of the Subcommittee on Cybersecurity, Information Technology, and Government Innovation.

At the subcommittee’s first hearing this year, expert witnesses said artificial intelligence has the potential to bring disruptive innovation to many fields. AI should drive economic growth, higher living standards, and improved medical outcomes. Almost every industry and institution will feel the impact.

Today we’ll discuss the impact of AI on the federal government, the nation’s largest and most powerful institution.

As you know, the federal government today carries out an ever-expanding array of activities, from securing the border to forecasting the weather to issuing benefit payments. Many of these functions could be significantly affected by AI. This is evident in the hundreds of current and potential AI use cases that federal agencies have published pursuant to executive orders issued under the previous administration.

Federal agencies are seeking to use AI systems to strengthen border security, make air travel safer, and speed up eligibility determinations for Social Security disability benefits. Those are just a few examples.

AI will also revolutionize the federal workforce itself. We often hear about how AI will disrupt the private sector workforce, transforming or eliminating some jobs while potentially creating others. Well, the federal government is the nation’s largest employer. And many of those employees work in white-collar jobs, which AI is already reshaping because it can perform many routine tasks more efficiently than humans. This allows federal employees to focus on higher-level tasks that maximize productivity.

In fact, Deloitte research estimates that using AI to automate federal employee tasks could reduce required labor hours and ultimately save as much as $41 billion annually. A separate study by the Partnership for Public Service and the IBM Center for the Business of Government identified 130,000 federal employee positions likely to be impacted by AI, including IRS tax examiners and 20,000 tax officials.

Of course, the question arises: Why hire tens of thousands of new IRS employees when AI has the potential to transform or even replace many of the jobs of current staff?

AI can make government jobs better. But while incredibly powerful, it’s still just a tool. And like any tool, it can easily be misused if applied for the wrong purpose or without proper safeguards.

AI systems are often powered by large amounts of training data flowing through complex algorithms. These algorithms can produce results that the designers themselves cannot predict and that are difficult to explain.

Therefore, it is important to have safeguards in place to prevent the federal government from exercising improper bias. We also need to ensure that the federal government’s use of AI does not violate the privacy rights of its own citizens.

The bottom line is that the government needs to guard against these potential dangers while leveraging AI to improve its operations.

To that end, Congress enacted the AI in Government Act in late December 2020, just before the current administration took office. The law requires the Office of Management and Budget to issue guidance to government agencies on the acquisition and use of AI systems. The Office of Personnel Management is also tasked with assessing the federal government’s AI talent needs.

However, the government has been slow to comply with this law. OMB is currently more than two years behind schedule in issuing guidance to agencies. OPM is also more than a year behind in determining how many federal employees have AI skills and how many federal employees need to be hired or trained.

The government’s failure to comply with these legal obligations is criticized in a lengthy white paper published by the Stanford AI Research Institute. The authors also found that many government agencies have not published the required lists of AI use cases. Some agencies omitted key use cases; DHS, for example, left out a critical facial recognition system. The Stanford paper summarizes the government’s non-compliance with various obligations and concludes that “the U.S. AI innovation ecosystem is threatened by weak and inconsistent implementation of these legal requirements.”

Most discussions about AI policy focus on how the federal government should police the use of AI by the private sector. But the executive branch cannot afford to lose focus on getting its own house in order. It must properly manage the use of its own AI systems in accordance with the law.

This subcommittee will continue to press the government to implement the laws designed to safeguard its use of AI.

And I’m pushing for further legislation to help federal agencies use AI systems effectively, securely, and transparently.

We hope this hearing will help inform these efforts.

With that, I turn to the Ranking Member of the Subcommittee, Mr. Connolly.