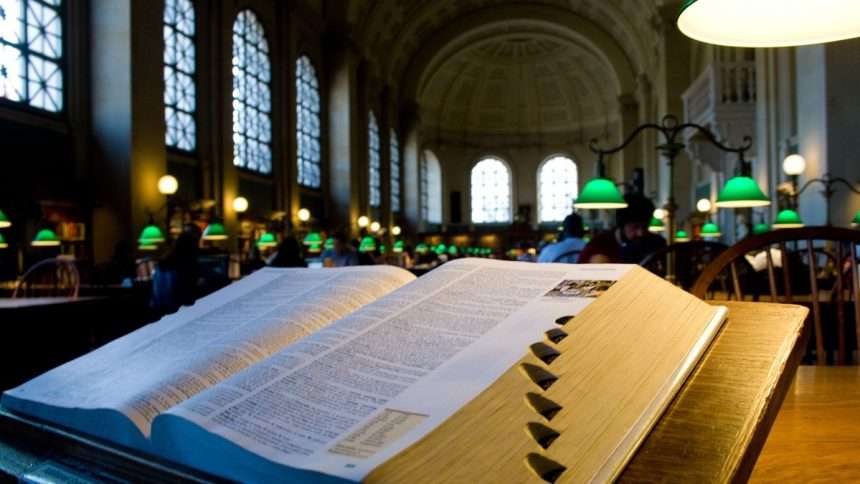

Few would disagree that the year 2023, in the world of technology at least, was dominated by artificial intelligence. Dictionaries have taken notice in their “Word of the Year” lists, and notably, all the AI-related words they highlight are existing words that have been appropriated and given new meanings. A little on the nose, isn’t it?

Cambridge’s word is “hallucinate.” This refers, of course, to the habit of generative AI models like ChatGPT of inventing anything from dates to entire people rather than admitting they don’t know. The problem is that these systems don’t know what they don’t know, because they don’t know anything at all.

Because they are complex word prediction models, all that matters is that they produce a sentence that resembles their training data. If you ask one about famous German surgeons of the 18th century and it doesn’t have an exact match, it will simply hallucinate something close, like Armand Werdiger of the Einschloss Research Hospital in Tullingen. See, I can do it too! All that matters is that it sounds plausible. Unfortunately, these hallucinations are stated with such confidence that countless have been accepted as real without question.

Hallucinations can be put to good use, though: generative images and audio are entirely and intentionally “hallucinated,” in that they are a blend of the model’s training data but not an exact reproduction of any of it (although they can come very close). This also has its risks, as AI-generated artwork and imagery of varying quality are now widespread in many contexts.

Henry Shevlin, an AI ethics expert at Cambridge, said the word’s adoption, despite its originally being limited to human cognition, “underscores our willingness to attribute human-like traits to artificial intelligence.” He added: “As this decade progresses, I expect our psychological vocabulary to expand to include the strange capabilities of the new intelligences we are creating.”

Merriam-Webster grabbed the other end of the stick by choosing “authentic” as its word of the year. “With the advent of artificial intelligence, and its impact on fake videos, actor contracts, academic honesty, and a host of other topics, the line between ‘real’ and ‘fake’ has become increasingly blurred.”

While the word “authentic” didn’t get a completely new definition, it did get a new and important connotation. For years we have worried about whether something we or others do is real or not. Authenticity is a paradox of modern consumerism: it cannot be bought or sold, and as such is perhaps the most valuable and marketable quality in the world.

Previously, we had to worry about whether a trend or item represented the true interests and choices of a person or group. Now we have to wonder if the thing was real in the first place, like the Pope’s gorgeous Balenciaga puffer.

“Deepfake” also made it to MW’s longlist, graduating (either fortunately or unfortunately) from a niche technique for revenge porn to a general-purpose term for generative artificial intelligence. Its origins may not be respectable, but we don’t get to choose what catches on.

As for Oxford’s Word of the Year, it would have been much better for this article if it were related to artificial intelligence, but unfortunately the AI term came in second place. “Prompt,” a versatile and underused word, has gained another definition with its now well-known meaning related to the human side of generative AI.

Image credits: Oxford University Press

When you ask an AI system to compile a list of article ideas based on the current weather, you enter a “prompt,” and indeed the word has quickly become a verb in this sense as well: one now “prompts” the system.

Of course, these are well-fitted extensions of the existing definitions of “prompt.” We have been prompted to respond for centuries. As a noun, “prompt” originally ran the other way in computer interfaces: a command-line prompt was itself a prompt for a human to respond. So here we have an interesting reversal. Who is prompting whom, or what? Whether this strengthens or weakens the word is a matter of taste.

If you’re wondering what Oxford’s actual word of the year is, it’s “rizz,” a fun shorthand for “charisma” and something that AI, like Tom Holland, arguably lacks completely.

It was inevitable that AI terms would creep into the lexicon, although I’m a bit sad that cooler terms like “latent space” haven’t entered general use yet. But technology moves fast enough that it makes better sense to stick with what is well established, as evidenced by the judgment exercised by my colleagues, as I like to think of them, in the world of lexicography. Still, we’re in for more words this year, as bolder dictionary content teams consider whether “vectors” and “embeddings” are also worth including.