AI can complement IT security teams in APAC. (Source – Kaspersky)

- AI can help fill the cybersecurity talent gap in APAC by supporting threat intelligence and incident response.

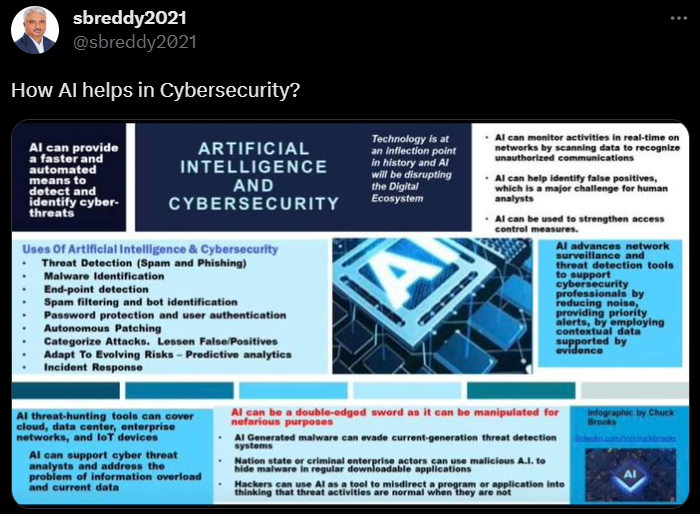

- AI is a double-edged sword in the APAC cybersecurity landscape.

- It can strengthen a company’s cyber defenses, but it also comes with the risk of misuse.

In an era marked by increasing digitalization and the prevalence of cyber threats, the Asia-Pacific (APAC) region faces the critical challenge of a significant shortage of cybersecurity talent. With the rapid advancement of malicious technology, innovative solutions to strengthen cyber defenses are urgently needed.

As of 2022, there is a shortage of 2.1 million cybersecurity professionals in the Asia Pacific (APAC) region. Kaspersky Lab specialists took a closer look at how cybersecurity teams are leveraging artificial intelligence (AI) to strengthen existing protections against rapidly changing cyber threats in the region.

Saurabh Sharma, senior security researcher at Kaspersky Lab’s Asia-Pacific Global Research and Analysis Team (GReAT), said that while cybercriminals could use AI for nefarious purposes, cybersecurity departments could benefit as well. He pointed out that this technology can be used for various purposes.

AI and Cybersecurity in APAC’s Digital Economy

In 2022, the APAC region faced a 52.4% shortage of cybersecurity talent, a critical challenge for driving the digital economy. Singapore in particular saw its cybersecurity workforce decrease by 16.5% to a total of 77,425 people, making it one of only two markets where the workforce shrank.

The global gap in cybersecurity talent increased by 26.2% to 3.42 million people. Asia-Pacific has the highest shortage, followed by Latin America with a shortage of 515,879 professionals and North America with a need of 436,080 professionals.

In Asia Pacific, 60% of survey participants admitted that there is a significant shortage of cybersecurity staff within their organizations. Additionally, 56% said this talent gap puts their organization at moderate or high risk of cyberattacks.

The urgent need for AI in cybersecurity

“This urgent need is driving IT security teams to consider the use of smart machines to strengthen their organizations’ cyber defenses. AI can help in critical areas such as threat intelligence, incident response, and threat analysis,” said Sharma.

The urgent need for AI in cybersecurity. (Source – Shutterstock)

In threat intelligence, AI can automate and enhance multiple steps involved in collecting, vetting, and sharing information about threats. These include:

- Threat hunting: AI helps proactively identify threats that are not yet commonly known. This technology helps cybersecurity experts discover new attack methods and weaknesses by studying anomalous behavior.

- Malware analysis: AI-powered tools automatically analyze malware samples and recognize their behavior, functionality, and possible consequences. This makes it easier to understand the purpose of the malware and the best strategies to mitigate it.

- Real-time threat detection: AI-based security solutions allow you to monitor network traffic, activity logs, and overall system behavior in real-time. Such systems can detect unusual or suspicious behavior that may be a sign of an ongoing cyber attack.
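As a rough illustration of the "unusual behavior" idea in the list above, the following Python sketch flags outliers in a series of activity counts using a simple statistical baseline. It is a stand-in for the AI-based detection described here, not how any Kaspersky product works; the function name `flag_anomalies`, the sample data, and the threshold of 3.5 are all assumptions made for the example.

```python
from statistics import median


def flag_anomalies(counts, threshold=3.5):
    """Return the indices of values that deviate strongly from the median.

    Uses the modified z-score, which is based on the median absolute
    deviation (MAD) and is therefore less distorted by the outliers it is
    trying to find than a mean/standard-deviation score.
    """
    if not counts:
        return []
    med = median(counts)
    mad = median(abs(c - med) for c in counts)
    if mad == 0:
        # More than half of the values equal the median, so flag
        # anything that differs from it.
        return [i for i, c in enumerate(counts) if c != med]
    return [
        i for i, c in enumerate(counts)
        if 0.6745 * abs(c - med) / mad > threshold
    ]


# Hypothetical failed-login counts per minute for a single host.
failed_logins = [3, 4, 2, 5, 3, 4, 97, 3, 2, 4]
print(flag_anomalies(failed_logins))  # prints [6]
```

A production system would learn its baseline from far richer signals (network traffic, process activity, user behavior), but the principle of comparing current activity against an expected range is the same.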

Sharma pointed out that AI algorithms can quickly sift through and evaluate past research and historical tactics, techniques, and procedures (TTPs), which can help in formulating hypotheses for threat hunting.

Kaspersky experts added that in cyber incident response, AI can point out irregularities in provided logs, interpret specific security event logs, hypothesize how a given security event log might look, and offer guidance for identifying the initial point of compromise, such as a web shell.
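To make the log-review step more concrete, here is a minimal rule-based sketch of one check an analyst (or an AI assistant) might suggest when looking for a web shell in web server access logs. It is a hand-written heuristic, not an AI model, and it assumes logs in Common Log Format; the names `suspicious_requests`, `SCRIPT_EXTENSIONS`, and `UPLOAD_HINTS`, as well as the sample log lines, are hypothetical.

```python
import re

# Pulls the client IP, method, path, and status out of a Common Log Format line.
REQUEST_RE = re.compile(
    r'^(?P<ip>\S+) .*?"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3})'
)

# Assumed indicators: server-side scripts living in directories that
# normally hold only uploaded or temporary files.
SCRIPT_EXTENSIONS = (".php", ".jsp", ".aspx")
UPLOAD_HINTS = ("/uploads/", "/images/", "/tmp/")


def suspicious_requests(lines):
    """Return (ip, path) pairs for successful POSTs to scripts in upload dirs."""
    hits = []
    for line in lines:
        match = REQUEST_RE.match(line)
        if not match:
            continue  # skip lines that are not in the expected format
        path = match.group("path").split("?", 1)[0].lower()
        if (
            match.group("method") == "POST"
            and match.group("status") == "200"
            and path.endswith(SCRIPT_EXTENSIONS)
            and any(hint in path for hint in UPLOAD_HINTS)
        ):
            hits.append((match.group("ip"), path))
    return hits


sample_log = [
    '203.0.113.7 - - [10/Oct/2023:13:55:36 +0000] "POST /uploads/shell.php HTTP/1.1" 200 512',
    '198.51.100.4 - - [10/Oct/2023:13:56:01 +0000] "GET /index.php HTTP/1.1" 200 1043',
    '198.51.100.4 - - [10/Oct/2023:13:56:09 +0000] "POST /login.php HTTP/1.1" 200 310',
]
print(suspicious_requests(sample_log))  # prints [('203.0.113.7', '/uploads/shell.php')]
```

A hit from a rule like this is only a lead for further investigation, since legitimate applications can also post to scripts in such directories.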

Regarding threat analysis (the stage in which cybersecurity experts take a deep dive into how the tools used in an attack work), Sharma said technologies like ChatGPT can help identify key elements of malware, obfuscated scripts, and more. He observed that they are also useful for setting up decoy web servers with certain encryption methods.

How AI can help with cybersecurity. (Source – X)

Double-edged sword: ChatGPT and prompt engineering

How can all this be achieved? As CSO Online reports, recent experiments demonstrated that a seemingly innocuous executable can be designed to make API calls to ChatGPT every time it runs. Rather than simply replicating an existing code sample, ChatGPT can be directed to create versions of malicious code that vary and evolve with each invocation, which makes detection by cybersecurity mechanisms more complicated.

ChatGPT, like other large language models (LLMs), has content filters designed to block requests for harmful content, such as malicious code. However, these content filters are not foolproof and can be circumvented.

Nearly all known potential exploits related to ChatGPT are currently performed using a technique known as “prompt engineering.” This involves modifying the input prompt to bypass the tool’s built-in content filter and thereby obtain the intended output.

Early users discovered that they could essentially “jailbreak” ChatGPT by framing their queries as hypothetical situations. For example, by asking the model to act as if it were a malicious individual rather than an AI, they could get it to produce malicious content.

Tricking ChatGPT into drawing on internal knowledge that its filters restrict allows users to get it to write potent malicious code. This can be taken further to generate polymorphic code, because the model adjusts and refines its output when the same prompt is executed multiple times.

Nevertheless, Sharma pointed out the limitations of AI in building and maintaining cybersecurity measures. He gave the following advice to APAC companies and organizations:

- Prioritize strengthening your current team and processes.

- Ensure transparency in the deployment and use of generative AI, especially when it returns incorrect data.

- Maintain a comprehensive record of all engagement with Generative AI, make it accessible for scrutiny, and preserve it throughout the life of the product integrated into your enterprise systems.
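The record-keeping advice above could be implemented in many ways. The following Python sketch shows one simple option, an append-only JSON Lines file with a content digest per entry. Everything in it is an assumption made for illustration: the function names `record_interaction`, `read_interactions`, and `verify_entry`, the field names, and the file layout are hypothetical and not something Kaspersky prescribes.

```python
import hashlib
import json
import os
import tempfile
from datetime import datetime, timezone


def _digest(entry):
    """SHA-256 over the entry's fields, excluding the digest itself."""
    payload = {k: v for k, v in entry.items() if k != "sha256"}
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def record_interaction(log_path, user, model, prompt, response):
    """Append one generative AI interaction to a JSON Lines audit log."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "model": model,
        "prompt": prompt,
        "response": response,
    }
    # Storing a digest makes later edits to an entry easier to spot.
    entry["sha256"] = _digest(entry)
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(entry, sort_keys=True) + "\n")
    return entry


def read_interactions(log_path):
    """Load every recorded interaction for review."""
    with open(log_path, encoding="utf-8") as log_file:
        return [json.loads(line) for line in log_file if line.strip()]


def verify_entry(entry):
    """Check that an entry still matches the digest stored with it."""
    return entry.get("sha256") == _digest(entry)


if __name__ == "__main__":
    # Write to a temporary directory so the example leaves no stray files.
    path = os.path.join(tempfile.mkdtemp(), "genai_audit.jsonl")
    record_interaction(path, "analyst1", "example-model",
                       "Summarize this alert", "The alert indicates ...")
    for row in read_interactions(path):
        print(row["timestamp"], row["user"], verify_entry(row))
```

A per-entry digest only detects accidental or naive changes. An organization that needs stronger guarantees would typically ship these records to write-once storage or a central logging system.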

In conclusion, Sharma said: “AI offers clear benefits for cybersecurity teams, especially when it comes to automating data collection, improving mean time to resolution (MTTR), and limiting the impact of incidents.” He added that security analysts can leverage this technology effectively, but that “organizations must remember that while smart machines can augment and complement human talent, they cannot replace it.”