
ChatGPT is leaking private conversations that include login credentials and other personal information of unrelated users, screenshots submitted by an Ars reader on Monday indicated.

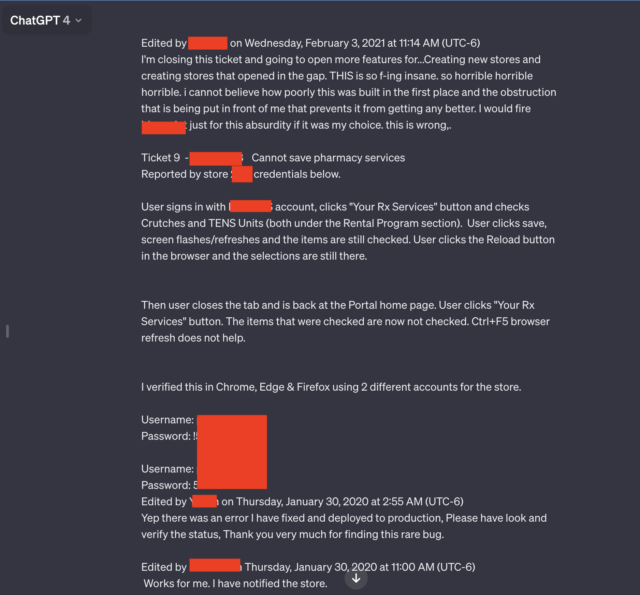

Two of the seven screenshots the reader submitted stood out in particular. Both contained multiple username and password pairs that appeared to be connected to a support system used by employees of a pharmacy’s prescription drug portal. An employee using the AI chatbot appeared to be troubleshooting issues encountered while using the portal.

“Terrible, terrible, terrible.”

“This is absolutely insane and horrifying, horrifying, horrifying. I can’t believe how poorly this was built in the first place. Improvements are being held back by the obstacles that have been put in front of us,” one user wrote. “I would fire [redacted name of software] just because of this absurdity if it was my choice. This is wrong.”

In addition to candid words and credentials, the leaked conversations include the name of the app the employee is troubleshooting and the number of the store where the issue occurred.

The entire conversation goes well beyond what is shown in the redacted screenshot above. A link provided by Ars reader Chase Whiteside showed the chat conversation in its entirety. The URL disclosed an additional pair of credentials.

The results appeared on Monday morning, shortly after reader Whiteside had used ChatGPT for an unrelated query.

“I went to make a query (in this case, help coming up with fancy names for the colors in a palette), and when I came back a while later, I noticed the additional conversations,” Whiteside wrote in an email. “They weren’t there when I used ChatGPT last night (I’m a pretty heavy user). No queries were made, they just appeared in my history, and they definitely didn’t come from me (and I don’t think they’re even from the same user).”

Other conversations leaked to Whiteside include the names of presentations someone was working on, details of unpublished research proposals, and scripts using the PHP programming language. The users in each leaked conversation appeared to be different and unrelated to each other. The conversation about the prescription portal included the year 2020. Dates didn’t appear in the other conversations.

This episode and others like it underscore the wisdom of removing as much personal information as possible from queries to ChatGPT and other AI services. Last March, ChatGPT creator OpenAI took its AI chatbot offline after a bug caused the site to show titles from one active user’s chat history to unrelated users.
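As a rough illustration of stripping personal details from a query before it is sent, here is a minimal Python sketch. The `scrub` function and both patterns are hypothetical and deliberately simple; they catch only email addresses and obvious `label: value` credential pairs, so they are a starting point rather than a complete safeguard.

```python
import re

# Hypothetical, deliberately simple patterns; real secrets take many more forms.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SECRET = re.compile(
    r"(?i)\b(password|passwd|pwd|username|user|login)\s*[:=]\s*\S+"
)


def scrub(text):
    """Mask email addresses and label/value credential pairs in a query."""
    text = EMAIL.sub("[EMAIL]", text)
    # Keep the label so the query still makes sense, but drop the value.
    return SECRET.sub(lambda m: f"{m.group(1)}: [REDACTED]", text)


query = "username: jdoe password=hunter2 contact jdoe@example.com"
print(scrub(query))
```

A filter like this would not have caught everything described above, such as store numbers or app names, so manual review of a query is still worthwhile.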

In November, researchers published a paper reporting how they used queries to prompt ChatGPT into leaking email addresses, phone and fax numbers, physical addresses, and other personal data contained in the material used to train ChatGPT’s large language model.

Concerned about the potential for leaking proprietary and personal data, companies including Apple restrict their employees’ use of ChatGPT and similar sites.

As we noted in a December article, in which multiple people discovered that Ubiquiti’s UniFi devices were broadcasting private video belonging to unrelated users, this type of experience is as old as the Internet. As explained in the article:

The exact root cause of this type of system failure varies from incident to incident, but often involves a “middlebox” device located between the front-end and back-end devices. To improve performance, the middlebox caches certain data, including the credentials of recently logged-in users. If a mismatch occurs, credentials from one account may be mapped to another account.
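The general failure mode described in that passage can be sketched in a few lines of Python. This is a toy model under stated assumptions, not a description of what happened at OpenAI or Ubiquiti: the `CachingMiddlebox` class, the `backend` function, and the `/history` path are all hypothetical. The bug shown is a cache key that omits the user's identity, so one user's cached response is served to another.

```python
class CachingMiddlebox:
    """Hypothetical middlebox that caches backend responses for speed."""

    def __init__(self, backend, key_includes_user):
        self.backend = backend
        self.key_includes_user = key_includes_user
        self.cache = {}

    def get(self, user, path):
        # The bug: keying the cache on the path alone ignores who is asking.
        key = (user, path) if self.key_includes_user else path
        if key not in self.cache:
            self.cache[key] = self.backend(user, path)
        return self.cache[key]


def backend(user, path):
    # Stand-in for the real service; returns data private to `user`.
    return f"{path} for {user}"


buggy = CachingMiddlebox(backend, key_includes_user=False)
buggy.get("alice", "/history")
leaked = buggy.get("bob", "/history")    # bob is served alice's cached data

fixed = CachingMiddlebox(backend, key_includes_user=True)
fixed.get("alice", "/history")
isolated = fixed.get("bob", "/history")  # bob gets his own data

print(leaked)
print(isolated)
```

In this sketch, including the user in the cache key is enough to keep responses separate; real systems face the same question for every layer that caches per-user data.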

An OpenAI representative said the company is investigating the report.