After Meta began tagging images with a “Made with AI” label in May, photographers complained that the social media company was applying the label to real photos that had only been touched up with basic editing tools.

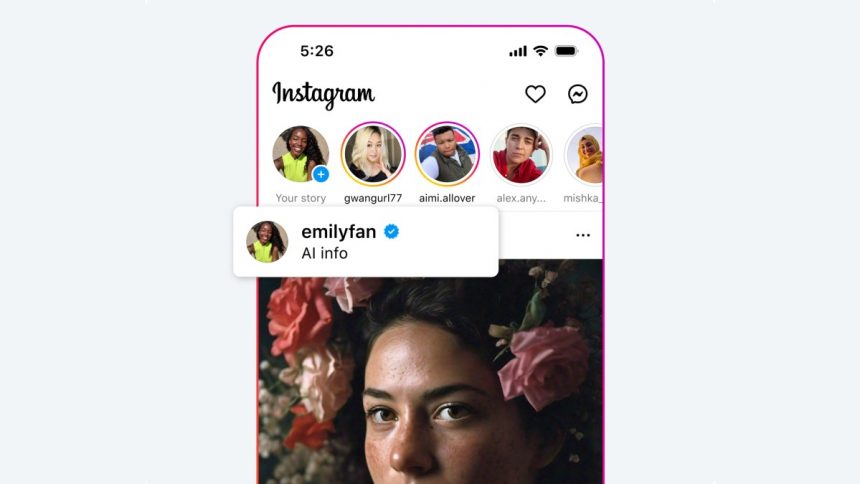

In response to user feedback and general confusion about how much AI was actually used in a given image, the company is changing the label to “AI info” across all of its apps.

Meta said the previous label didn’t make it clear enough to users that a labeled image wasn’t necessarily generated with AI, but may only have been edited with AI-powered tools.

“Like others across the industry, we’ve found that our labels based on these indicators weren’t always aligned with people’s expectations and didn’t always provide enough context. For example, some content that included minor modifications using AI, such as retouching tools, included industry-standard indicators that were then labeled ‘Made with AI,’” the company said in an updated blog post.

The company is not changing the underlying technology it uses to detect and label AI in images. Meta still relies on technical metadata standards such as C2PA and IPTC, which include information about the use of AI tools.

This means that if photographers use tools like Adobe’s Generative Fill to remove objects, their photos may still carry the new label. Meta hopes, however, that the new label will help people understand that a labeled image isn’t necessarily generated entirely by AI.

“‘AI info’ can include content that was created and/or modified using AI, so the hope is that this will be more in line with people’s expectations, as we work with companies across the industry to improve the process,” Meta spokesperson Kate McLaughlin told TechCrunch via email.

The new label still won’t solve the problem of fully AI-generated images going undetected. Nor will it tell users how much AI-powered editing has been done to an image.

Meta and other social networks will need to develop guidelines that are fair to photographers who haven’t changed their editing workflows but whose retouching tools now include some generative AI features. Companies like Adobe, for their part, should warn photographers that using a particular tool could get their images flagged on other services.