A newly unredacted version of the lawsuit against Meta alleges a troubling pattern of deception and minimization in how the company treats children under 13 on its platforms. Internal documents appear to show that the company’s approach to this ostensibly forbidden demographic is far more lax than it has publicly claimed.

The lawsuit, filed last month, alleges widespread harmful practices at the company affecting the health and well-being of the young people who use its platforms. From body image to bullying, from invasion of privacy to maximization of engagement, all the alleged evils of social media are laid at Meta’s door – perhaps rightly, but it also gives the appearance of a lack of focus.

However, in at least one respect, the documents obtained by prosecutors in 42 states are quite specific, “and they are devastating,” in the words of California Attorney General Rob Bonta. That’s in paragraphs 642 through 835, which mostly document violations of the Children’s Online Privacy Protection Act, or COPPA. This law created very specific restrictions concerning young people online, limiting data collection and requiring things like parental consent for various actions, but a lot of tech companies seem to treat it as more of a suggestion than a requirement.

You know it’s bad news for the company when it asks for pages and pages of redactions:

Image credits: TechCrunch/42AG

This recently happened with Amazon as well, and it turned out the company was trying to hide the existence of a price-hiking algorithm that skimmed billions from consumers. But it’s much worse when what’s being redacted is COPPA complaints.

“We are very optimistic and confident in our COPPA allegations. Meta is knowingly taking steps that harm children, and lying about it,” AG Bonta told TechCrunch in an interview. “In the unredacted complaint, we see that Meta knew that its social media platforms were used by millions of children under 13, and that it unlawfully collected their personal information. It shows the common practice where Meta says one thing in its public-facing comments to Congress and other regulators, while internally it says something else.”

“Meta did not obtain, or even attempt to obtain, verifiable parental consent before collecting children’s personal information on Instagram and Facebook… But Meta’s own records reveal that it had actual knowledge that Instagram and Facebook target and successfully enroll children as users,” the lawsuit says.

Essentially, while the problem of identifying child accounts created in violation of platform rules is certainly a difficult one, Meta allegedly chose to turn a blind eye for years rather than enact stricter rules that would necessarily impact user numbers.

For its part, Meta said the lawsuit “distorts our work by using selective quotes and cherry-picked documents… We have procedures in place to remove these [i.e. under-13] accounts when we identify them. However, verifying people’s age online is a complex industry challenge.”

Here are some of the most striking parts of the suit. While some of these allegations relate to practices from years ago, keep in mind that Meta (then Facebook) had been publicly saying it didn’t allow kids on the platform, and worked hard to detect and kick them out, for a decade.

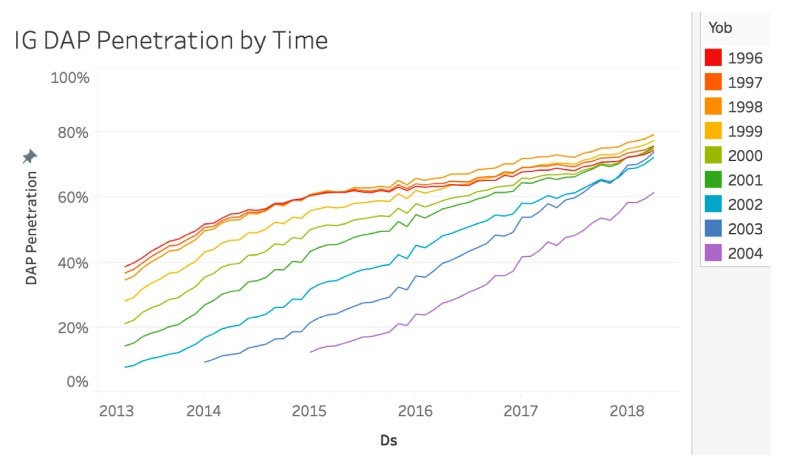

Meta has for years tracked and documented users under 13, or “U13s,” internally in its audience breakdowns, as charts in the filing show. In 2018, for example, it noted that 20% of 12-year-olds on Instagram used it daily. This wasn’t a presentation on how to remove them – this was about market penetration. Another chart shows “Meta’s knowledge that 20-60% of 11-13 year old users in certain birth cohorts actively used Instagram on at least a monthly basis.”

A newly unredacted chart shows Meta tracking users under 13 very closely.

It is difficult to reconcile this with the company’s public position that users of this age are not welcome. And it isn’t as though leadership was unaware.

In the same year, 2018, CEO Mark Zuckerberg received a report stating that there were approximately 4 million people under 13 on Instagram in 2015, which amounted to about a third of all children ages 10 to 12 in the United States, by the report’s estimate. These numbers are clearly outdated, but they are surprising nonetheless. Meta has never, to our knowledge, acknowledged the presence of such large numbers and proportions of users under 13 on its platforms.

Not externally, at least. Internally, the numbers appear to be well documented. For example, as the lawsuit claims:

Meta has data from 2020 indicating that of the 3,989 children surveyed, 31% of 6- to 9-year-olds and 44% of 10- to 12-year-olds used Facebook.

It’s difficult to extrapolate from the 2015 and 2020 figures to today’s numbers (which, judging by the evidence presented here, would almost certainly not tell the whole story), but Bonta pointed out that the large figures are presented for effect, not as legal justification.

“The basic premise remains that millions of children under 13 are using their social media platforms. Whether that’s 30 percent, 20 percent, 10 percent… any child, it’s illegal,” he added. “If they were doing it at any point, it violated the law at that time. And we’re not sure they’ve changed their ways.”

An internal presentation called “Strategic Focus for Teens 2017” appeared to specifically target children under 13, noting that children are using tablets as early as 3 or 4 years old, and that “social identity is an unmet need” for ages 5 to 11. One stated goal, according to the lawsuit, was specifically “growing [Monthly Active People], [Daily Active People] and time spent among children under 13 years of age.”

It is important to note here that although Meta does not allow people under 13 to run accounts, there are many ways in which it can legally and safely engage with this demographic. Some kids just want to watch videos from the official SpongeBob account, and that’s okay. However, Meta must verify parental consent, and the ways in which it can collect and use their data are limited.

But the unredacted passages indicate that Meta’s engagement with these under-13 users is not of the legal and safe kind. Reports of underage accounts were allegedly ignored automatically, and Meta “continues to collect the child’s personal information if there are no photos associated with the account.” Of the 402,000 reports of accounts owned by users under 13 in 2021, fewer than 164,000 accounts were disabled. These actions reportedly do not carry across platforms, meaning that disabling an Instagram account does not flag the associated or linked Facebook or other accounts.

Zuckerberg testified before Congress in March 2021 that “if we find out that someone might be under 13, even if they lied, we kick them off.” (“They lie about this a lot,” a research director said in another quote.) But documents from the following month cited by the lawsuit indicate that “age verification (for under-13s) has a significant backlog and demand is outstripping supply” because of a “lack of [staffing] capacity.” How big was the backlog? At times, the lawsuit claims, on the order of millions of accounts.

Potentially damning evidence emerges in a series of anecdotes from Meta researchers who carefully avoided any chance of inadvertently confirming the presence of under-13 users in their work.

“We just want to make sure we’re sensitive about some Instagram stuff,” one wrote in 2018. “For example, will the survey include people under the age of 13? Since everyone must be at least 13 years old before creating an account, we want to be careful about sharing findings that indicate children under 13 are being bullied on the platform.”

In 2021, another researcher, who studies “sexual content/behavior/interactions between children and adults” (!), said she was “not includ[ing] the younger kids (10-12) in this research” although “there are definitely kids of this age on IG,” because she was “concerned about the risk of exposure since they’re not supposed to be on IG at all.”

Also in 2021, Meta instructed a third-party research company surveying teens to remove any information indicating that a survey subject was on Instagram, so that “the company will not be aware of those under the age of 13.”

Later that year, outside researchers provided Meta with information showing that “of children ages 9 to 12, 45% use Facebook and 40% use Instagram daily.”

During an internal 2021 study of youth and social media described in the lawsuit, researchers first asked parents whether their children used Meta platforms and removed them from the study if so. But one researcher asked: “What happens to kids who get through the screener and then say they’re on IG during interviews?” Instagram’s head of public policy, Karina Newton, responded: “We don’t collect usernames, do we?” In other words, nothing happens.

As the lawsuit says:

Even when Meta learns about specific children on Instagram through interviews with children, Meta takes the position that it still lacks actual knowledge that it is collecting personal information from a user under 13 because it does not collect usernames while conducting these interviews. In this way, Meta goes to great lengths to avoid meaningfully complying with COPPA, looking for loopholes to excuse its knowledge of users under 13 and maintain their presence on the platform.

Other complaints in the lengthy lawsuit have softer edges, such as the argument that platform use contributes to poor body image and that Meta failed to take appropriate measures. Arguably, those are not as actionable. But the COPPA stuff is far more cut and dried.

“We have evidence of parents sending them notes about their child being on their platform, and they’re not taking any action. I mean, what more could you need? It shouldn’t even get to this point,” Bonta said.

“These social media platforms can do anything they want,” he continued. “They can be run on a different algorithm, they can have plastic surgery filters or not have them, they can give you alerts in the middle of the night or during school, or not. They’ve chosen to do things that increase the frequency of use of that platform by kids, and the duration of that use. They could end it all today if they wanted to, and they could easily block those under 13 from accessing their platform. But they’re not.”

You can read the mostly unredacted complaint here.

TechCrunch has contacted Meta for comment on the lawsuit and some of these specific allegations, and we will update this post if we receive a response.