Microsoft said Monday that it has taken steps to remediate a security lapse that led to the exposure of 38 terabytes of private data.

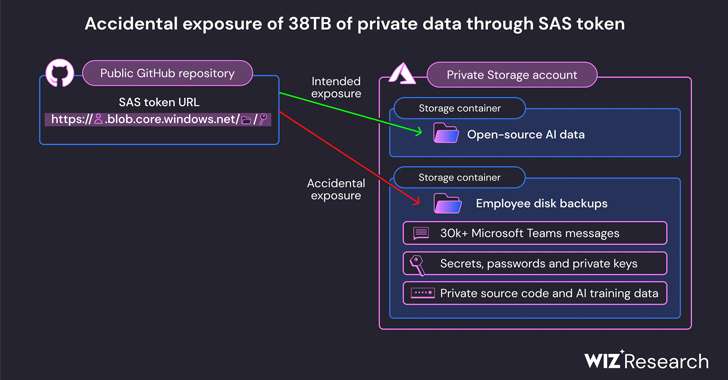

The leak was discovered in the company’s AI GitHub repository and was inadvertently made public while publishing a bucket of open-source training data, Wiz said. The exposed data also included a disk backup of two former employees’ workstations containing secrets, keys, passwords, and over 30,000 internal Microsoft Teams messages.

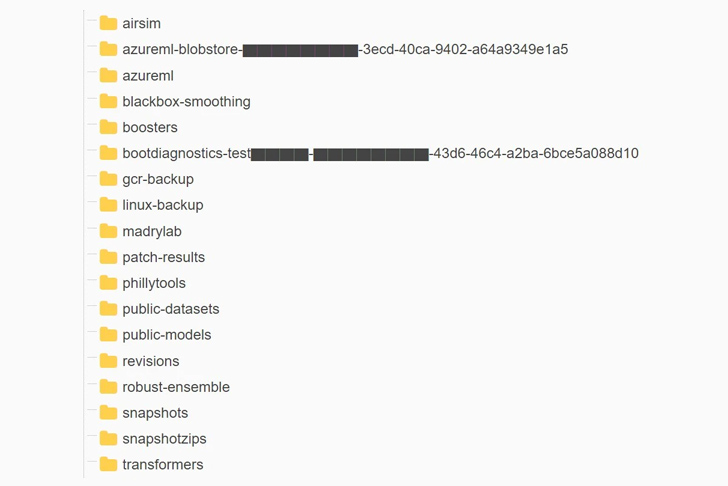

The repository, named “robust-models-transfer,” is no longer accessible. Before it was taken down, it featured source code and machine learning models related to a 2020 research paper titled “Do Adversarially Robust ImageNet Models Transfer Better?”

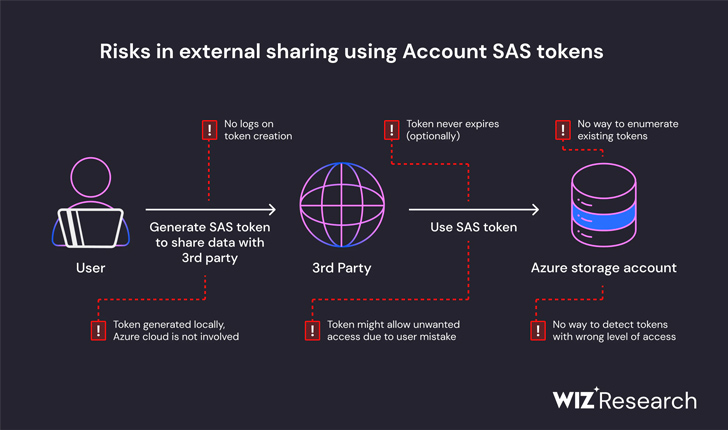

“The exposure came as the result of an overly permissive SAS token, an Azure feature that allows users to share data in a manner that is both hard to track and hard to revoke,” Wiz said in a report. The issue was reported to Microsoft on June 22, 2023.

Specifically, the repository’s README.md file instructed developers to download the models from an Azure Storage URL that inadvertently also granted access to the entire storage account, thereby exposing additional private data.

“In addition to the overly permissive access scope, the token was also misconfigured to allow ‘full control’ permissions instead of read-only,” Wiz researchers Hillai Ben-Sasson and Ronny Greenberg said. “Meaning, not only could an attacker view all the files in the storage account, but they could delete and overwrite existing files as well.”

In response to the findings, Microsoft said its investigation found no evidence of unauthorized exposure of customer data and that “no other internal services were put at risk because of this issue.” It also emphasized that customers need not take any action.

The Windows maker further noted that it had revoked the SAS token and blocked all external access to the storage account. The problem was resolved two days after responsible disclosure.

To mitigate such risks going forward, the company has expanded its secret scanning service to include any SAS token that may have overly permissive expirations or privileges. It also said it had identified a bug in its scanning system that flagged the specific SAS URL in the repository as a false positive.

“Due to the lack of security and governance over Account SAS tokens, they should be considered as sensitive as the account key itself,” the researchers said. “Therefore, it is highly recommended to avoid using Account SAS for external sharing. Token creation mistakes can easily go unnoticed and expose sensitive data.”
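The kind of check described above, flagging SAS URLs with overly broad scope, excessive privileges, or distant expiry dates, can be approximated in a few lines. The following is a minimal Python sketch, not Microsoft’s scanner: it parses a SAS URL’s query string and reports an account-level token (identified here by the `ss`/`srt` parameters), permissions beyond read/list in `sp`, and an `se` expiry far in the future. The function name `audit_sas_url`, the seven-day `MAX_LIFETIME` threshold, and the set of letters treated as write-like are illustrative assumptions; Azure’s SAS documentation is the authority on the parameter definitions.

```python
from datetime import datetime, timedelta, timezone
from urllib.parse import parse_qs, urlparse

# Assumption: permission letters treated as granting more than read/list access.
# Illustrative, not an exhaustive list of Azure SAS permissions.
WRITE_LIKE = set("wdacuxy")

# Hypothetical policy threshold: tokens valid longer than this are flagged.
MAX_LIFETIME = timedelta(days=7)


def audit_sas_url(url, now=None):
    """Return human-readable findings for one SAS URL (empty list if none)."""
    now = now or datetime.now(timezone.utc)
    # parse_qs URL-decodes values, e.g. %3A in the expiry becomes ':'.
    params = {k: v[0] for k, v in parse_qs(urlparse(url).query).items()}
    findings = []

    if "sig" not in params:
        return findings  # no signature parameter: not treated as a SAS URL

    # Account SAS tokens carry ss (services) and srt (resource types);
    # service SAS tokens use sr (signed resource) instead.
    if "ss" in params or "srt" in params:
        findings.append("account SAS: scoped to the whole storage account")

    # sp lists one letter per granted permission, e.g. "rl" or "racwdl".
    risky = sorted(set(params.get("sp", "")) & WRITE_LIKE)
    if risky:
        findings.append("grants more than read access: " + "".join(risky))

    expiry = params.get("se")
    if expiry is None:
        findings.append("no expiry (se) parameter found")
    else:
        # fromisoformat() only accepts a trailing "Z" from Python 3.11 on.
        expires_at = datetime.fromisoformat(expiry.replace("Z", "+00:00"))
        if expires_at.tzinfo is None:
            expires_at = expires_at.replace(tzinfo=timezone.utc)  # SAS times are UTC
        if expires_at - now > MAX_LIFETIME:
            findings.append(f"long-lived: expires {expires_at.date()}")

    return findings


if __name__ == "__main__":
    # Hypothetical account SAS URL: whole-account scope, write/delete, far-off expiry.
    url = ("https://example.blob.core.windows.net/?sv=2021-08-06&ss=b&srt=sco"
           "&sp=racwdl&se=2051-10-06T07:09:59Z&sig=REDACTED")
    for finding in audit_sas_url(url):
        print(finding)
```

For the hypothetical account-level URL in the example, the function reports all three findings, while a read-only, blob-scoped service SAS that expires within a week produces none. A real scanner would also need to locate SAS URLs inside arbitrary text and cope with the full range of timestamp formats Azure accepts.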

This is not the first time misconfigured Azure storage accounts have come to light. In July 2022, JUMPSEC Labs highlighted a scenario in which a threat actor could take advantage of such accounts to gain access to an enterprise’s on-premises environment.

The development is the latest security blunder at Microsoft. It comes nearly two weeks after the company revealed that China-based hackers were able to infiltrate its systems and steal a highly sensitive signing key by compromising an engineer’s corporate account and likely accessing a crash dump of the consumer signing system.

“AI unlocks huge potential for tech companies. However, as data scientists and engineers race to bring new AI solutions to production, the massive amounts of data they handle require additional security checks and safeguards,” Wiz CTO and co-founder Ami Luttwak said in a statement.

“This emerging technology requires large sets of data to train on. With many development teams needing to manipulate massive amounts of data, share it with their peers, or collaborate on public open-source projects, cases like Microsoft’s are increasingly hard to monitor and avoid.”